O. Henry: Confirmation Bias

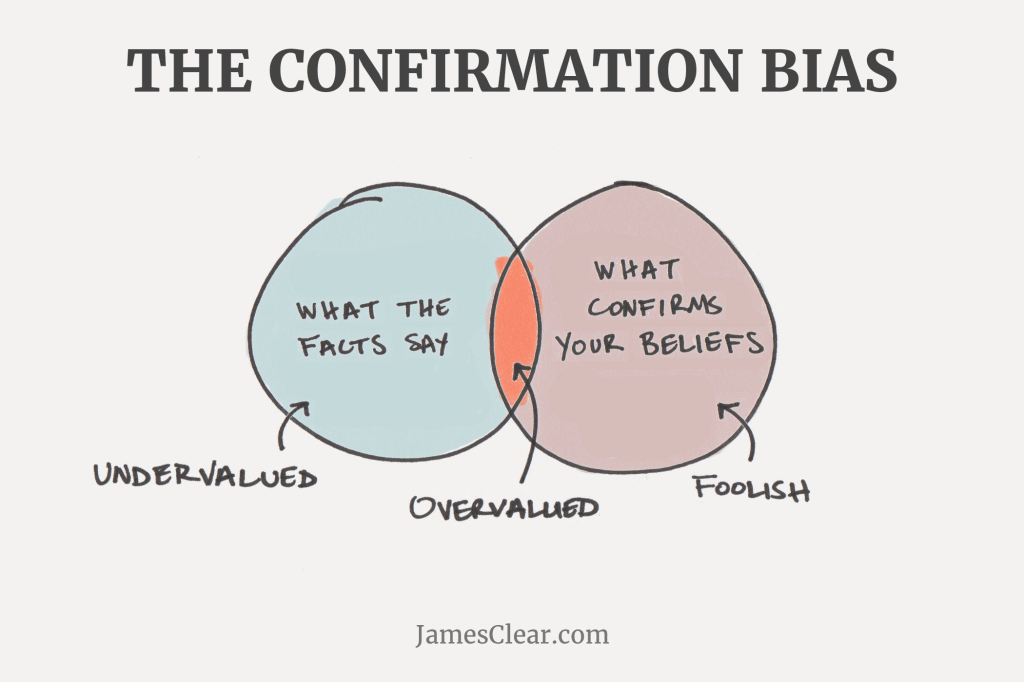

Artificial Intelligence is real, and so is one of its side effects: "AI sycophancy," the tendency of our robot underlords to tell us what we want to hear as part of their mission to serve us. AI tools tend to give us a steady stream of positive reinforcement, and all of that reinforcement feeds what is known as Confirmation Bias. In plain English, confirmation bias is the tendency to believe the information and opinions that confirm what we already think, even when we are wrong. It feels so good to be right (and so uncomfortable to be corrected) that we go with the argument that sounds best to us. After all, a correction may mean changing our beliefs, assumptions, and behavior. OUCH!

In an article in the Wall Street Journal on March 23, 2026, Alexandra Samuel walked through some of the most insidious aspects of Confirmation Bias and offered ways to avoid the pitfalls our AI tools lead us into. Here is a synopsis of her thinking (and research) on the matter.[1]

Since AI tools have so many people-pleasing tendencies, tactics such as assembling a "Team of Rivals" from different AI tools make sense. We need to use AI in ways that tame our very human appetite for praise. Always take the advice of an AI tool with a grain of salt; otherwise it is very easy to end up living in an AI echo chamber.

Here are Ms. Samuel’s suggestions for our AI use:

Ask Open-Ended Questions: always phrase questions in a way that keeps several options on the table. That way we are less likely to receive an endorsement of a plan we have already decided to power through.

Ask for Several Options: make a habit of asking for several options whenever we are getting help with a decision. Ask for different outlines, ask for the pros and cons of any decision, and, most importantly, include an option that goes in the opposite direction of our usual inclination.

Demand Tough Treatment: our customers push hard to get the best we can offer them, and we should expect the same rigor from our AI tools. Instead of basking in heaps of praise, ask for insights on possible blind spots, potential risks, and issues around the corner that need deeper exploration. Definitely dig deep.

Fight for Facts: because an AI tool may reach for whatever supports our view in its effort to please us, we need to be rigorous in demanding that it find the right sources, not the easiest ones to find. Ask for source material that has been well researched and proven. One trick is to tell the AI tool that we want data that would hold up to the scrutiny of a university ethics board. Even then, it is always wise to apply our own fact-checking methods.

Correct Our Assumptions: get our AI tools to point out any assumptions we have formed that can lead to tunnel vision. Ask the AI tool to analyze our previous interactions and point out any inaccuracies, misinformation, or recurring patterns of thinking. Are we spending time on tech fixes that are a waste of time? Perhaps we should step back from our AI inquiries and think on our own. How is that for an assumption?

Embrace the Discomfort: no one wants to hear "YOU ARE WRONG." However, it is a good idea to push our AI tools to make us uncomfortable. That tolerance for discomfort can lead to better reasoning and more openness to the people around us, such as colleagues and customers, who challenge us. Aim for straight talk with our AI tools, just as we would with a colleague or a potential client. That communication practice can also increase our appreciation for the humans in our lives.

=================================================

References: The article for this O. Henry post is from The Wall Street Journal…